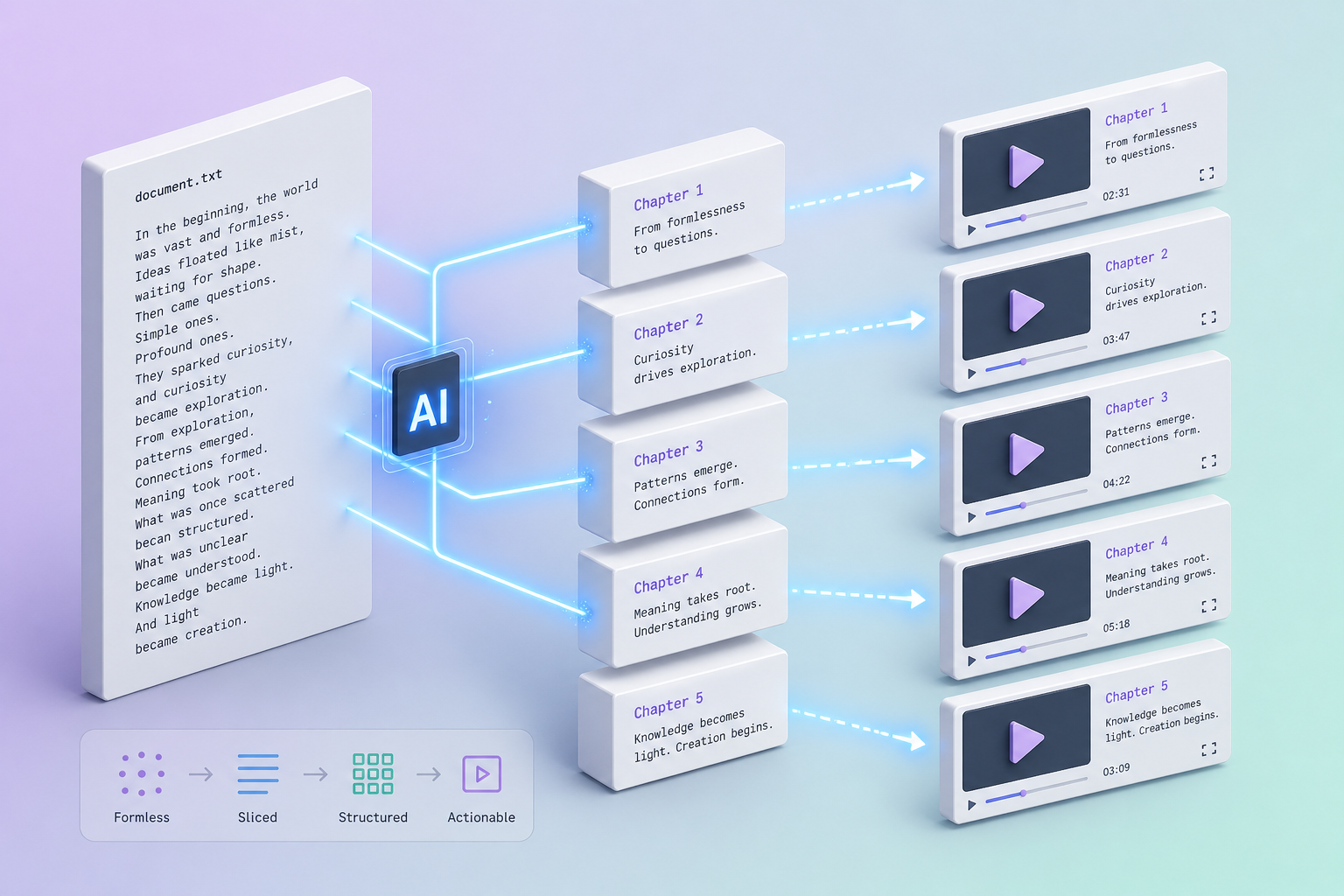

TXT to Video — Plain Text Becomes Structured AI Explainer Video

Drop a .txt file or paste raw text — drafts, transcripts, copied notes, AI outputs, journal entries. Vibeknow's AI auto-chapters unstructured text into scenes and outputs a 1080p video with voiceover, motion graphics, and subtitles. The simplest source format, the same finished quality.

TL;DR — who TXT to video works for

If you've got writing somewhere as plain text — anywhere from "transcript I dumped from Otter" to "first-pass essay draft" to "ChatGPT output I want to ship as video" — this page is for you.

- Podcast and YouTube hosts turning episode transcripts into video summaries.

- Solo writers and indie creators with text-heavy second-brain notes ready to be repurposed.

- Researchers and analysts dropping unformatted notes into a tool and getting a coherent video summary back.

- AI power users who generate long-form text with ChatGPT/Claude and want to ship video versions immediately.

- Speakers turning conference talk transcripts into evergreen video.

If your text is heavily structured (with explicit headings, lists, code), use Markdown to video or Word to video instead — those formats preserve more semantic detail.

Why most "text to video" tools produce slop

- No semantic boundaries. Without headings, naive tools either smash everything into one long scene or randomly chop at paragraph breaks.

- Pacing collapses. A long block of text + a single voice = a 12-minute monotone with no visual rhythm.

- No featured imagery. Nothing to anchor the hero scene; tools default to generic stock footage.

- Transitions are jarring. Without inferred topic shifts, scene changes happen at arbitrary moments that fight the narrative.

- Conversational filler. Transcript text contains "um," "you know," and tangents that get narrated verbatim.

How Vibeknow turns unstructured text into structured video

1. AI auto-chaptering finds the seams

The first parsing pass clusters sentences semantically to find where topics shift, even without explicit headings. Transition phrases ("now let's look at," "on the other hand") are detected and used as scene boundaries. The AI's proposed scene plan is editable, so you can move boundaries around if needed.
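To make the transition-phrase idea concrete, here is a minimal Python sketch. It is an illustration only, not Vibeknow's implementation: the function names and the cue list are hypothetical, and it covers only the phrase-matching part, not the semantic clustering.

```python
import re

# Hypothetical cue list; the two phrases are the examples quoted in the text.
TRANSITION_CUES = ("now let's look at", "on the other hand")

def split_sentences(text: str) -> list[str]:
    """Naive sentence splitter: break after ., ! or ? followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]

def propose_scenes(text: str) -> list[list[str]]:
    """Group sentences into scenes, opening a new scene at each transition cue."""
    scenes: list[list[str]] = []
    for sentence in split_sentences(text):
        starts_new = sentence.lower().startswith(TRANSITION_CUES)
        if starts_new or not scenes:
            scenes.append([])
        scenes[-1].append(sentence)
    return scenes
```

Each inner list is one proposed scene. A real system would combine cues like these with a measure of topical similarity between neighboring sentences, which this sketch leaves out.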

2. Filler and disfluency cleanup

Transcript noise ("um," "uh," repeated false starts, off-topic tangents) is detected and excluded from the voiceover script by default. The original text stays available for review; we just don't read every "you know" aloud.
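As a rough illustration of filler removal, the Python sketch below strips a small, hypothetical list of fillers with a regular expression. It is an assumption about how such a pass could look, not the product's actual cleanup logic, and it does not handle false starts or tangents.

```python
import re

# Hypothetical filler list; "um", "uh" and "you know" are the examples named in the text.
FILLER_PATTERN = re.compile(r"\b(?:um+|uh+|you know)\b,?\s*", re.IGNORECASE)

def strip_fillers(text: str) -> str:
    """Return a copy of text with fillers removed; the input string is left untouched."""
    cleaned = FILLER_PATTERN.sub("", text)
    cleaned = re.sub(r"\s{2,}", " ", cleaned)  # collapse doubled spaces left behind
    return cleaned.strip()
```

A pattern this simple also removes legitimate uses of "you know" (as in "Do you know the answer?"), which is one reason keeping the original text available for review matters.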

3. Visual rhythm built from scratch

For text without embedded images, Vibeknow generates motion graphics that match each scene's content: typography-driven scenes for quotes, animated diagrams for explanations, abstract visuals for transitions. The 40+ visual templates were designed by a team with 10+ years of experience in editorial, explainer, and documentary aesthetics, not by slideshow vendors.

4. Pacing tuned to length

500-word source → ~3-minute video, ~6 scenes. 2,000 words → ~6 minutes, ~10 scenes. 5,000 words → ~12 minutes (or a recommended 2-part split). The default rhythm keeps each scene at 30–60 seconds, long enough to register and short enough to keep attention.
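The three length figures above happen to lie on a straight line: roughly 2 minutes plus 1 minute per 500 words. The Python sketch below uses that observation to estimate video length, and derives a scene-count range from the 30–60 second scene rhythm. The formula is a fit to the quoted numbers, not a documented Vibeknow rule, and the function name is hypothetical.

```python
import math

def estimate_pacing(word_count: int) -> dict:
    """Estimate video length and a scene-count range from a source word count.

    Assumption: minutes = 2 + words / 500, a line that passes through the
    three quoted data points. It is not a documented product formula.
    """
    minutes = 2 + word_count / 500
    seconds = minutes * 60
    return {
        "minutes": minutes,
        # Scenes of 30-60 seconds bound the scene count on both sides.
        "min_scenes": math.ceil(seconds / 60),
        "max_scenes": math.floor(seconds / 30),
    }
```

For a 2,000-word source this gives 6 minutes and between 6 and 12 scenes, which brackets the ~10 scenes quoted above.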

How to convert plain text to a video — step by step

Step 1 — Upload .txt or paste text

Drag a .txt file into Vibeknow or paste raw text directly into the editor. UTF-8 works automatically; ASCII, Latin-1, and other common encodings are auto-detected. No specific formatting is required, but if you can spend 30 seconds adding 4–5 section breaks (any consistent marker: ***, ---, ALL CAPS), the video pacing improves noticeably.
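If you want to check how a file will decode before uploading it, a small Python sketch like the one below tries UTF-8 first and falls back to Latin-1. This is one common fallback strategy, offered as an assumption; it does not describe how Vibeknow detects encodings, and the function name is hypothetical.

```python
def decode_text(raw: bytes) -> str:
    """Decode file bytes, trying UTF-8 first and Latin-1 as a fallback."""
    try:
        # "utf-8-sig" also strips a leading byte-order mark if one is present.
        return raw.decode("utf-8-sig")
    except UnicodeDecodeError:
        # Latin-1 maps every byte to a character, so this never raises.
        return raw.decode("latin-1")
```

Plain ASCII needs no special case because it is a subset of UTF-8. Because the Latin-1 fallback always succeeds, it can silently mis-decode files in other legacy encodings, so skim the result for garbled characters.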

Step 2 — Review the auto-chaptered plan

Within about a minute, Vibeknow returns the inferred scene structure. This is where you confirm the AI's interpretation matches what you intended — adjust scene boundaries, drop sections that don't belong, pick a voice. For longer text (3K+ words), the scene plan is your single best opportunity to control pacing.

Step 3 — Generate, export

Click generate. The 1080p video is ready in 5–10 minutes — voiceover, motion graphics, subtitles. Export the MP4. For podcast-derived videos, also export the audio as MP3 (one click) for podcast feed compatibility.

Five TXT-to-video patterns we see most often

Podcast transcript → episode summary video

An hour-long podcast transcribed via Otter / Descript / Whisper becomes a 6-minute summary video for YouTube cross-posting. AI strips disfluencies, finds topic shifts, generates pacing. Replaces the "I'll cut a clip later" debt.

ChatGPT / Claude output → instant video

You ask the AI to draft an explainer essay; you paste the response into Vibeknow; 10 minutes later you have a video. The fastest source-to-video pipeline that exists, useful for content marketing teams testing topics quickly.

Notes app archive → video diary / video journal

A solo creator who's been writing daily journal entries in Apple Notes / Bear / Obsidian for years generates a video version of select entries. Particularly powerful for thinkers building a back-catalog before launching a YouTube channel.

Conference talk transcript → evergreen video

You gave a talk at a conference; the transcript exists. Vibeknow turns the transcript into a polished video version with motion graphics — better than the actual recording for distribution because pacing, visuals, and audio quality are controlled.

Research notes → analyst summary video

An analyst's raw research notes (5,000+ words of plain text) become a 7-minute summary video for client distribution. Saves the manual rewrite into a deck or memo.

Plain text fit table

| Source | Fit? | Notes |
|---|---|---|
| Long-form draft / essay (1K+ words) | ✅ Excellent | The native sweet spot for AI auto-chaptering. |
| Podcast / talk transcript | ✅ Excellent | Disfluency cleanup is built-in. |
| ChatGPT / Claude long-form output | ✅ Excellent | AI output usually has implicit structure the AI auto-chaptering catches. |
| Lecture notes / research notes | ✅ Yes | Add 4–5 section breaks for tighter pacing. |
| Email or letter | ✅ Yes | Works for narrative emails; less for transactional. |
| Twitter thread / very-short content | ⚠️ Below threshold | < 500 words is tight; 200 words rarely produces a coherent video. |
| Pure list / bullet dump | ⚠️ Add framing | A list of 20 things needs an intro + outro to land as video. |
| Highly technical text with code | ❌ Use Markdown | Switch to Markdown to video so code blocks render properly. |

Other source formats Vibeknow supports

- Document to video (overview) — the umbrella guide.

- Markdown to video — when your text is .md with headings.

- Word to video — for .docx with formatting.

- Notion to video — when content lives in Notion.

- Blog to video — when content is already published online.

FAQ

What kinds of plain text work as a video source?

Any text-only content: draft scripts, transcripts of recorded talks, copied notes, output from voice-memo transcription, ChatGPT outputs, journal entries, raw essay drafts. The looser the structure, the more Vibeknow's auto-chaptering does for you. Best results when content is at least 500 words; below that, the AI struggles to find clean scene boundaries.

How does AI auto-chaptering work?

Vibeknow's first parsing pass detects natural topic shifts in your text — paragraph breaks where the subject pivots, transition phrases ('Now let's look at...', 'On the other hand...'), and semantic clustering of sentences. The result is a proposed scene plan even when your source has no headings. You can adjust scene boundaries before generation if the AI's split doesn't match what you intended.

Should I add headings myself or let the AI infer them?

If your text already has explicit ALL-CAPS section breaks, asterisk dividers (***), or numbered headings (1. Section / 2. Section), Vibeknow uses those. If not, the AI infers boundaries — which works well for narrative content but can be choppy for highly technical text. The reliable rule: 30 seconds of adding 4–5 section breaks before upload almost always produces a tighter video than letting the AI guess.
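To preview how explicit markers might divide your text, the Python sketch below splits on the three marker styles named above. The patterns and function names are hypothetical and only approximate what a real parser would accept; they are not taken from Vibeknow.

```python
import re

# Divider lines such as "***" or "---" (three or more of the same character).
DIVIDER = re.compile(r"^\s*(\*{3,}|-{3,})\s*$")
# Numbered headings such as "1. Section".
NUMBERED = re.compile(r"^\s*\d+\.\s+\S")

def is_all_caps_heading(line: str) -> bool:
    """True for short lines whose letters are all uppercase."""
    stripped = line.strip()
    return stripped.isupper() and len(stripped.split()) <= 8

def split_sections(text: str) -> list[str]:
    """Split text at dividers, numbered headings, or ALL-CAPS lines."""
    sections: list[list[str]] = [[]]
    for line in text.splitlines():
        if DIVIDER.match(line):
            sections.append([])        # divider is dropped; next section starts
        elif NUMBERED.match(line) or is_all_caps_heading(line):
            sections.append([line])    # heading line opens the next section
        else:
            sections[-1].append(line)
    joined = ("\n".join(s).strip() for s in sections)
    return [s for s in joined if s]
```

Note that the numbered-heading pattern also matches ordinary numbered list items, so this sketch over-splits text that contains lists. That is one more reason to pick a single consistent marker.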

How is this different from Word or Markdown to video?

Word and Markdown have explicit structure (headings, lists, code blocks) that Vibeknow respects literally. Plain text has no such markers, so the AI does more interpretive work: clustering sentences, inferring topic boundaries, deciding pacing. The video output is the same quality; the source-side prep is just leaner.

What's the maximum text length?

There is no hard cap. Most users paste 500 to 5,000 words and get a 4–7 minute video. For longer texts (10K+ words, e.g., a transcript of a 90-minute talk), we recommend either a 2-part series or pre-splitting the text into focused sections — a single 30-minute video has poor retention regardless of source quality.
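If you prefer to pre-split a long text yourself, one simple approach is to pack whole paragraphs into parts under a word budget. The Python sketch below does that; the 5,000-word default is an assumption based on the typical range quoted above, and the function name is hypothetical.

```python
def split_into_parts(text: str, max_words: int = 5000) -> list[str]:
    """Greedily pack paragraphs into parts, aiming for max_words words per part.

    Paragraphs are never cut, so a single paragraph longer than max_words
    becomes its own oversized part.
    """
    parts: list[str] = []
    current: list[str] = []
    current_words = 0
    for paragraph in text.split("\n\n"):
        paragraph = paragraph.strip()
        if not paragraph:
            continue
        n = len(paragraph.split())
        # Start a new part when adding this paragraph would exceed the budget.
        if current and current_words + n > max_words:
            parts.append("\n\n".join(current))
            current, current_words = [], 0
        current.append(paragraph)
        current_words += n
    if current:
        parts.append("\n\n".join(current))
    return parts
```

Splitting by word count alone ignores topic boundaries, so treat the output as a starting point and move the cut to the nearest natural break.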

Can I paste text directly without a file?

Yes. Vibeknow accepts pasted text in the editor — no need to save a .txt file first. Useful when your draft is in a chat window, a transcript service, or anywhere else; just copy and paste.

What about images for an unstructured text source?

Plain text has no embedded images, so Vibeknow generates AI motion graphics for every scene by default. If you have specific images you want to use (charts, photos, screenshots), upload them after the scene plan is generated and assign each to its target scene. The 40+ visual templates in our library cover most editorial / educational / explainer aesthetics out of the box.

Can I use my own voice for these videos?

Yes, on the Pro plan at $67/month and above. Voice cloning gives you consistent personal narration across every text-derived video, in 30+ languages. Useful for podcast hosts converting transcripts into video, journal-keepers publishing video diaries, or solo writers building a video library on top of years of plain-text drafts.

Convert your first .txt to video — free, no credit card

Drop a text file or paste a draft. Get a 1080p explainer video back in under 10 minutes.

Start free →